We have worked closely with Cisco for many years in large, complex environments and have developed integrations to support a variety of Cisco solutions for our joint customers. In recent years, we have seen increased interest in Cisco Meraki devices from enterprises that are also AlgoSec customers. In this post, we highlight some of the AlgoSec capabilities that can quickly add value for Meraki customers.

Meeting the Enterprise

The Cisco Meraki MX is a multifunctional security and SD-WAN enterprise appliance with a wide set of capabilities that address multiple use cases from a single, all-in-one device. Organizations across all industries rely on the MX to deliver secure connectivity to hub locations or multicloud environments. The MX is 100% cloud managed, so installation and remote management are truly zero-touch, making it ideal for distributed branches, campuses, and data center locations.

In our talks with AlgoSec customers and partner architects, it is evident that the benefits that originally made Meraki MX popular in commercial deployments are just as appealing to enterprises. Many enterprises now face a surge in employees working from home and burgeoning demand for scalable remote access, along with growing network demands from regional centers. The leader of one security team I spoke with put it very well: “We are deploying to 1,200 locations in four global regions, planned to be 1,500 by year’s end. The choice of Meraki is for us a ‘no-brainer.’ If you haven’t already, I know that you’re going to see this become a more popular option with many big operations.”

Natural Companions – AlgoSec ASMS and Cisco Meraki-MX

This is a natural fit for AlgoSec ASMS, which meets these enhanced requirements by reinforcing Meraki’s impressive capabilities and scale as a combined, enterprise-class solution. ASMS brings traffic planning and visualization, rule optimization and management, and the policy reporting and compliance auditing that enterprise-level requirements call for.

At AlgoSec, we’re proud of AlgoSec FireFlow’s ability to model the security-connected state of any endpoints across an entire enterprise. Now our customers with Meraki MX can extend this technology that they know and trust, analyze real traffic in complex deployments, and understand the requirements and impact of changes delivered to the users and applications connected by their Meraki deployments.

As it’s unlikely that your needs, or those of any data center and enterprise, are met by a single vendor and model, AlgoSec unifies operations of the Meraki MX with those of other technologies, such as enterprise NGFWs and software-defined network fabrics. Our application-centric approach means that Meraki MX can be one component in delivering zero-trust and microsegmentation solutions alongside other Cisco technologies, such as Cisco ACI, and third-party products.

Cisco Meraki – Product Demo

If all of this sounds interesting, take a look for yourself to see how AlgoSec helps with common challenges in these enterprise environments.

More Where This Came From

The AlgoSec integration with Cisco Meraki MX is delivering solutions our customers want. If you want to discover more about the Meraki and AlgoSec joint solution, contact us at AlgoSec! We work together with Cisco teams and resellers and will be glad to schedule a meeting to share more details or walk through a more in-depth demo.

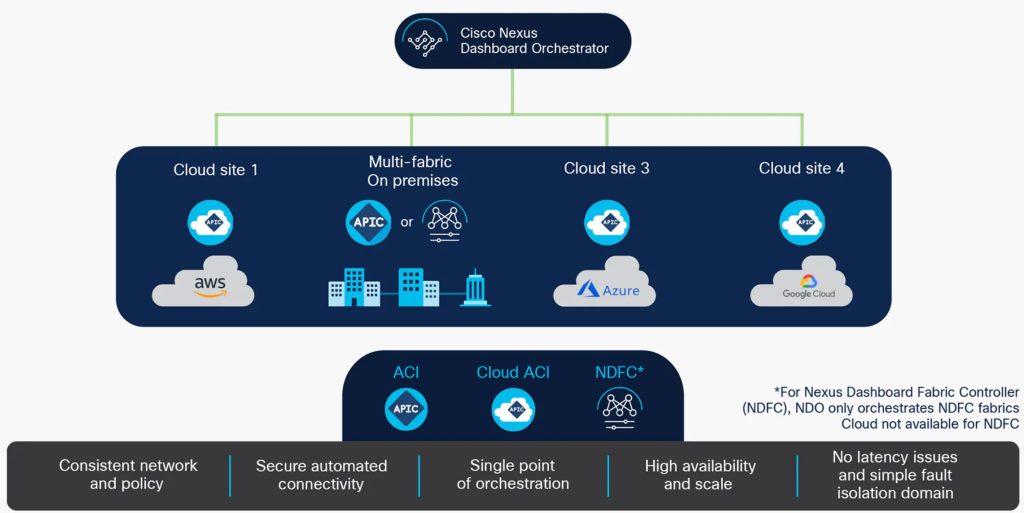

AlgoSec ASMS A32.6 is our latest release to feature a major technology integration. Built on our well-established collaboration with Cisco, it brings this partnership to the front of the Cisco innovation cycle with support for Nexus Dashboard Orchestrator (NDO). NDO allows Cisco ACI, and legacy-style Data Center Network Management, to operate at scale in a global context, across data center and cloud regions. The AlgoSec solution with NDO extends our intelligent automation and software-defined security features for ACI, including planning, change management, and microsegmentation, to this global scope. I urge you to see what AlgoSec delivers for ACI across multiple use cases: enabling application-mode operation and microsegmentation, and delivering integrated security operations workflows. AlgoSec now adds support for NDO Shadow EPGs and Inter-Site Contracts to our existing ACI strengths.

I had my first encounter with Cisco Application Centric Infrastructure in 2014 at a Symantec Vision conference. The original Senior Product Manager and Technical Marketing lead were hosting a discussion about the new results from their recent Insieme acquisition and were eager to onboard new partners with security use cases and added operational value. At the time I was promoting the security ecosystem of a different platform vendor, and I have to admit that I didn’t fully understand the tremendous changes that ACI was bringing to security for enterprise connectivity. It’s hard to believe that seven years have passed since then and that Cisco ACI has mainstreamed software-defined networking, changing the way network teams had run their networks and devices since at least the mid-’90s.

Since that 2014 introduction, Cisco ACI has changed the landscape of data center networking by introducing an intent-based approach in place of earlier configuration-centric architecture models. This opened the way for enterprise data centers to move faster on internal cloud deployments, new DevOps and serverless application models, and the extension of these to public clouds for hybrid operation, all within a single networking technology that uses familiar switching elements. Two new software-defined artifacts make this possible in ACI: Endpoint Groups (EPGs) and Contracts, the individual rules that define the characteristics and behavior of an allowed network connection.
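To make the EPG-and-contract idea concrete, here is a minimal Python sketch. It is a simplified, hypothetical model rather than the APIC object model or API: the `FilterEntry` and `Contract` classes and the `is_allowed` helper are illustrative names, and a contract is reduced to a set of provider EPGs, consumer EPGs, and protocol/port filters. It assumes ACI’s allow-list behavior, in which traffic between two different EPGs passes only when some contract lets the source EPG consume a service that the destination EPG provides.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass(frozen=True)
class FilterEntry:
    """One permitted protocol/destination-port pair (simplified)."""
    protocol: str  # e.g. "tcp" or "udp"
    port: int


@dataclass
class Contract:
    """Hypothetical contract: consumer EPGs may reach provider EPGs via filters."""
    name: str
    providers: Set[str] = field(default_factory=set)
    consumers: Set[str] = field(default_factory=set)
    filters: Set[FilterEntry] = field(default_factory=set)


def is_allowed(src_epg: str, dst_epg: str, protocol: str, port: int,
               contracts: List[Contract]) -> bool:
    """Allow-list check: between different EPGs, only contracted traffic passes."""
    if src_epg == dst_epg:
        # Simplification: traffic within a single EPG is treated as permitted.
        return True
    wanted = FilterEntry(protocol, port)
    return any(
        src_epg in c.consumers and dst_epg in c.providers and wanted in c.filters
        for c in contracts
    )


web_to_app = Contract(
    name="web-to-app",
    providers={"app"},
    consumers={"web"},
    filters={FilterEntry("tcp", 8443)},
)
print(is_allowed("web", "app", "tcp", 8443, [web_to_app]))  # True
print(is_allowed("web", "db", "tcp", 5432, [web_to_app]))   # False: no contract
```

Because nothing flows between EPGs unless a contract says so, the set of contracts doubles as a statement of application intent. The sketch deliberately ignores real ACI details such as return-traffic handling and intra-EPG isolation.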

That’s really where NDO comes into the picture. By now, we have an ACI-driven data center networking infrastructure, with management redundancy that keeps applications available and preserves their intent characteristics. Through an infrastructure built on EPGs and contracts, we can reach from mobile and desktop to the data center and the cloud. Our next barrier, then, is sharing intent-based objects and management operations beyond the confines of a single data center. We want to do this without resorting to clustering approaches that depend on the availability of individual controllers and run into other limits on availability and oversight.

Instead of labor-intensive and error-prone duplication of data center networks and security in different regions, and for different zones of cloud operation, NDO introduces “stretched” shadow EPGs, and inter-site contracts, for application-centric and intent-based, secure traffic which is agnostic to global topologies – wherever your users and applications need to be.

NDO Deployment Topology – Image: Cisco

With NDO capability added to the formidable shared platform of AlgoSec and Cisco ACI, region-wide and global policy operations can be executed with confidence through intelligent automation. AlgoSec makes it possible to plan operations across the Cisco NDO scope of connected fabrics in application-centric mode, unlocking ACI’s super-powers for micro-segmentation. This enables a shared model between networking and security teams for zero-trust and defense-in-depth, with accelerated, global-scope, secure application changes at the speed of business demand: within minutes, rather than days or weeks.

Change management: For security policy change management, this means that workloads can be securely relocated from on-premises to public cloud under a single, uniform network model and change-management framework, ensuring consistency across multiple clouds and hybrid environments.

Visibility: With an NDO-enabled ACI networking infrastructure and AlgoSec’s ASMS, all connectivity can be visualized at multiple levels of detail across an entire multi-vendor, multi-cloud network. This means that individual security risks can be directly correlated to the assets they affect, and that teams gain a full understanding of how security controls affect an application’s availability.

Risk and Compliance: Across all NDO-connected fabrics, it’s possible to identify risk on-premises and through the connected ACI cloud networks, including additional cloud-provider security controls. The AlgoSec solution makes this a self-documenting system for NDO, with detailed reporting and an audit trail of network security changes tied to the original business and application requests. This means that you can generate automated compliance reports that support a wide range of global regulations, as well as your own, self-tailored policies.

Cisco NDO is a major technology, and AlgoSec is in the early days of our feature introduction; nonetheless, we are delighted and enthusiastic about our early-adoption customers. Based on early reports from our Cisco partners, needs will arise for more automation, including “zero-touch” push for policy changes: committing Shadow EPG and Inter-Site Contract changes to the orchestrator, as we currently do for the ACI APIC. Feedback will also shape a need for automation playbooks and workflows that are most useful in the NDO context, and that we can realize with a fully committable policy from the ASMS Firewall Analyzer.

Contact Us!

I encourage anyone interested in NDO, and in enhancing their operational maturity with aligned network and security operations, to talk to us about our joint solution. We work together with Cisco teams and resellers and will be glad to share more.

All organizations will experience added pressure during the holiday season, but the biggest impact will be felt by online retailers. Black Friday and Cyber Monday, which take place at the end of November, have seen steady growth over the last few years. In 2020, Cyber Monday garnered $10.8bn, making it the biggest eCommerce day in US history. Black Friday, on the other hand, saw an online spending increase of about 22% YoY to around $9 billion.

Tackling the surge in website traffic and ensuring servers don’t fail is only half the challenge for eCommerce organizations. The flood of visitors could mask a more sinister problem with far greater repercussions, both financially and reputationally.

It is meant to be the most joyous time of year, but for many, the festive period around Thanksgiving and Christmas can be the most challenging when it comes to cybersecurity. Cybercrime is a chronic problem that evolves as our shopping behaviors change. The pandemic and the subsequent increase in online shopping have given rise to various attack methods, such as card-not-present (CNP) fraud, which exploits transactions in which the customer pays for goods without physically presenting their credit card to the merchant. According to Juniper Research, retailers could lose $130bn globally to CNP fraud by 2023. Other common techniques include phishing, DDoS attacks, and SQL injection.

eCommerce retailers need to take online security seriously. They cannot afford to risk their customers’ data or their own reputation. While it’s almost impossible to keep every hacker out, there are tactics you can employ to avoid a large-scale takeover. Here are five ways you can ward off cyberattacks this holiday season:

If you work in eCommerce and want some advice on how you can make this time of year full of cheer, speak to one of the team or arrange a personal demo here.

NSX-T is the culmination of VMware’s decade of development in network virtualization and security, and it aligns better than ever with AlgoSec’s approach to software automation of micro-segmentation and compliant security operations management.

It is the latest iteration of VMware’s approach to networking and security, derived from many years as a platform for operating virtual machines and managing them as hosted “vApp” workloads. If you’re familiar with the main players in software-defined networking, you may remember that NSX-T shares its origin with the same student research at Stanford University that also gave rise to several competing SDN offerings.

One thing that differentiated VMware from other players was its strong focus on virtualization over traditional network equipment stacks. This meant that, in some cases, network connections, data packets, forwarding, and endpoints all existed in software, with no “copper wire” anywhere! This difference is more than a bit of trivia: it explains how the NSX family was designed with security features built into the architecture, with native capability for software security controls such as firewall segmentation and packet inspection. Described by VMware as “Intrinsic Security,” these NSX capabilities first drove the widespread acceptance of practical micro-segmentation in the data center.

Since that first introduction of NSX micro-segmentation, customer demands have transformed, requiring VMware to expand its universe beyond the hypervisor and virtual machines. As a key enabler of this expansion, NSX-T has emerged as a networking and security technology that extends from serverless micro-services and container frameworks to VMs hosted on many cloud architectures, whether in physical data centers or as tenants in public clouds. The current iteration is called the NSX-T Service-Defined Firewall, which controls access to applications and services using business-focused policies.

If you’ve followed this far, you may have recognized several common themes between AlgoSec’s Security Management Suite and VMware’s NSX-T. Among these are managing security operations as software configuration, modeling connectivity on business uses rather than technology conventions, and transforming security into an enabling function. It’s no surprise, then, that our companies are technology partners.

In fact, we began our alliance with VMware back in 2015 as the uptake in NSX micro-segmentation began to reveal an increased need for visibility, planning, automation, and reporting — along with requirements for extending policy from NSX objects to attached physical security devices from a variety of vendors.

The sophistication and flexibility of NSX enforcement capability were excellently matched by the AlgoSec strengths in identifying risk and maintaining compliance while sustaining a change management record of configurations from our combined workflow automation.

So far, this paints a rosy picture, emphasizing the upsides of the AlgoSec partnership with VMware NSX-T. In the real world, we find that many of our applications are not understood well enough to be ready for micro-segmentation. More often, the teams responsible for the availability and security of these applications are detached from the business intent and value, making it even harder to assess, and therefore address, risks. The line between traditional-style infrastructure and modern services isn’t always clearly defined, either, which makes the potential advantages of migration and transformation difficult to determine and can introduce risks of its own. It is in these environments, with multiple technologies, different stakeholders, and operations teams with different scopes, that AlgoSec solves hard problems with better automation tools.

Taking advantage of NSX-T means first being faced with multiple deployment types, including public and private clouds as well as on-prem infrastructure, multiple security vendors, unclear existing network flows, and missing associations between business applications and their existing controls. These are visibility issues that AlgoSec resolves by automating the discovery and mapping of business applications, including associated policies across different technologies, and producing visual, graphic analysis that includes risk assessment and impact of changes.

This capability for full visibility leads directly to addressing the open issues of risk and compliance. After all, if discovering and identifying risk is already a challenge with existing technology solutions, then there’s a big gap to close on the way to transforming them. Once AlgoSec provides visibility across these environments, identifying risk becomes uniform and manageable. AlgoSec can lower transformation risk with NSX-T while ensuring that risk and compliance management are maintained on an ongoing basis. Workflows for risk mitigation through NSX-T intrinsic security can be driven by AlgoSec policy automation, without resorting to multiple tools when these mitigations need to cross boundaries to third-party firewalls or cloud security controls. With this integrated policy automation, what were once point-in-time configurations can instead be updated through ongoing discovery as internal standards and regulatory mandates change.

The result of pairing AlgoSec with VMware NSX-T is a simplified overall security architecture: one that responds more rapidly to emerging risks and change requests, accelerates operations while aligning more closely with the business, and ensures both compliant configurations and compliant lifecycle operations.

The AlgoSec integration with VMware NSX-T builds on our years of collaboration with earlier versions of the NSX platform, with a track record of solving the more difficult configuration management problems for leaders of principal industries around the globe.

If you want to discover more about what AlgoSec does to enable and enrich our alliance solution with VMware, contact us! AlgoSec works directly with VMware and your trusted technology delivery partners, and we’re glad to share more with you. Schedule a personal demo to see how AlgoSec makes your transformation to VMware Intrinsic Security possible now.

I’ve noticed something over the past few years of discussing security with our technology partners. Even today, in the general adoption phase of the Cloud Era, many organizations still have significant ‘legacy’ infrastructure embedded in their data centers and network operations. You might be surprised by who some of these are; it’s probably not who you imagine. Some already have an established footprint in hybrid cloud services or even run entire parts of their business on publicly hosted cloud providers. Others understand the technology and business advantages of this next generation of application and hosting architectures and are engaged in multi-year projects to effect these transitions. So, even forward-leaning IT organizations straddle gaps between modernization and established legacy infrastructure, particularly in their internal switched networks built on Cisco Nexus 7000 or even earlier generations. This comes at the cost of operational complexity and duplicated effort, a theme that recurs often in these conversations.

The IT leadership of a company still operating Cisco Nexus 7000 in traditional-style 3-tier or 2-tier ‘leaf-spine’ switched networks hasn’t ignored the advantages of new, software-defined logical fabrics like Cisco ACI. The reason for maintaining these legacies isn’t the same in every case, but a commonly mentioned one is an inability to prioritize budget and investment for on-premises solutions versus mandates for hosted clouds. Another recurring story is the challenge of migrating data segmentation policy from previous Nexus 7000 or even Nexus 5000 configurations to software-defined fabric operations. The barrier isn’t a missing technology pathway or a lack of ACI capabilities; more often it lies in the very large number of existing applications that must be maintained for availability, security, and compliance. Even well-managed IT operators have a poor understanding of older multi-tier application deployments in their custodial worlds, so migrating to a newer, cloud-friendly network platform is a risk for these data center operators. As they remain tasked with maintaining existing capabilities for ‘unknown applications’, they settle for the known costs, and risks, of these legacy Nexus solutions. This situation, with split horizons for operation and different management tools, may make their desired future state ever harder to reach over time.

For many, this consideration of data center legacy is very familiar territory. If you operate environments built on Cisco Nexus 7000 devices, you’ve already been inundated with end-of-life warnings and messages about the benefits of upgrading to Nexus 9000 driven by Cisco ACI as your application-centric cloud management fabric. So, you may already understand the benefits of modernizing your network, embracing digital transformation for these on-premises deployments, while extending a single architecture into the cloud.

Because of the partnership between Cisco and AlgoSec, you now have a solution for taking full advantage of the power of Cisco Nexus and Cisco ACI. This is achievable without abandoning the management of your traditional application networking or subjecting your organization to new operational risks, and you can now add a whole new dimension by also managing your network security, realizing a higher ROI.

Modernizing your legacy network using Nexus 9000 and the AlgoSec Security Management Suite empowers a secure digital transformation so you can cover your entire networking needs, including the configurations served by well-established legacy Nexus environments. AlgoSec’s capabilities complement and expand those of Nexus 9000 — and coupled with Cisco ACI they provide full visibility into your entire hybrid multi-vendor network, network security policy automation, compliance, and security policy enforcement.

With AlgoSec ASMS, switch-segment policies and secure networking configurations can be migrated from Nexus (legacy or 9000) to Cisco ACI in application-centric mode, providing improved agility and manageability along with new capabilities for risk and compliance. The integration of Cisco ACI with the AlgoSec Security Management Suite is a complete solution, providing your organization with full visibility, visualization, and automation for the connected security of your entire network. Here, “entire network” reaches beyond the on-premises legacy to multi-vendor and cloud management operations, with advanced change management and detailed reporting capabilities. Our partner solution unlocks Cisco ACI’s potential by providing full visibility, automation, compliance, and micro-segmentation capabilities from AlgoSec.

With our joint solution, your organization will be enabled by software-defined security for a software-defined network, one that embraces continuity for your entire multi-vendor, hybrid deployment. Through the unifying AlgoSec workflow for NetOps and SecOps, security policy changes can be implemented automatically on your network through zero-touch automation. This solution’s intelligent automation workflow automatically pushes security policy changes to your entire network and enables automated deployment of contracts, EPGs, and filters to Cisco ACI controllers. Connectivity can also be modeled, then deployed at the business application level. This enables companies like yours to use a single process for deploying applications and security policies across their entire estate, both in the cloud and in the data centers you host for your organization.

AlgoSec is your first, best contact for legacy modernization with Cisco Nexus 9000, and will take the lead with your existing Cisco relationship leaders to retire data center legacy with aligned network and security operations. Talk to us at AlgoSec about our Cisco joint solution, together with your Cisco team and reseller. Convinced that it is time to harness the full power of migrating to Nexus 9000? Schedule a personal demo to see how AlgoSec makes the transition flawless.

Compliance standards come in many different shapes and sizes. Some organizations set their own internal policies, while others are subject to regimented global frameworks such as PCI DSS, which protects customers’ card payment details; SOX, which safeguards financial information; or HIPAA, which protects patients’ healthcare data.

Regardless of which industry you operate in, regular auditing is key to ensuring your business maintains its security posture while also remaining compliant. The problem is that running manual risk and security audits can be a long, drawn-out, and tedious affair. A 2020 report from Coalfire and Omdia found that for the majority of organizations, growing compliance obligations are now consuming 40% or more of IT security budgets and threaten to become an unsustainable cost.

The report suggests two reasons for this growing compliance burden. First, compliance standards are changing from point-in-time reviews to continuous, outcome-based requirements. Second, the ongoing cyber-skills shortage is stretching organizations’ abilities to keep up with compliance requirements. This means businesses tend to leave them until the last moment, leading to a rushed audit that isn’t as thorough as it could be, putting your business at increased risk of a penalty fine or, worse, a data breach that could jeopardize the entire organization.

The auditing process itself consists of a set of requirements that must be created for organizations to measure themselves against. Each rule must be manually analyzed and simulated before it can be implemented and used in the real world. As if that wasn’t time-consuming enough, every single edit to a rule must also be logged meticulously. That is why automation plays a key role in the auditing process. By striking the right balance between automated and manual processes, your business can achieve continuous compliance and produce audit reports seamlessly.

Here is a six-step strategy that can set your business on the path to sustainable and successful ongoing auditing:

The first step, gathering key information, will be the most arduous, but once completed it becomes much easier to sustain. This is when you’ll need to gather things like security policies, firewall access logs, documents from previous audits, and firewall vendor information: effectively, everything you’d normally factor into a manual security audit.

A good change management process is essential to ensure traceability and accountability when it comes to firewall changes. This process should confirm that every change is properly authorized and logged as and when it occurs, providing a picture of historical changes and approvals.
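As an illustration of the authorize-then-log principle, the sketch below keeps an append-only record of firewall changes and refuses any change that lacks an approver or that was approved by its own requester. The class and field names, and the no-self-approval rule, are assumptions chosen for illustration; they are not an AlgoSec or firewall-vendor API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class ChangeRecord:
    """One approved firewall change (hypothetical fields)."""
    ticket_id: str
    requested_by: str
    approved_by: str
    description: str
    applied_at: datetime


class ChangeLog:
    """Hypothetical append-only log: entries can be added, never edited."""

    def __init__(self) -> None:
        self._records: List[ChangeRecord] = []

    def record(self, ticket_id: str, requested_by: str,
               approved_by: Optional[str], description: str) -> ChangeRecord:
        # Refuse unapproved changes so every logged entry is authorized.
        if not approved_by:
            raise ValueError(f"{ticket_id}: change has no approver")
        # Assumed separation-of-duties rule: no self-approval.
        if approved_by == requested_by:
            raise ValueError(f"{ticket_id}: requester cannot approve their own change")
        entry = ChangeRecord(ticket_id, requested_by, approved_by, description,
                             datetime.now(timezone.utc))
        self._records.append(entry)
        return entry

    def history(self) -> Tuple[ChangeRecord, ...]:
        # Return a tuple so callers cannot rewrite past entries.
        return tuple(self._records)


log = ChangeLog()
log.record("CHG-101", "alice", "bob", "Allow tcp/443 from web tier to app tier")
try:
    log.record("CHG-102", "carol", "carol", "Open tcp/22 from any")
except ValueError as err:
    print(err)  # CHG-102: requester cannot approve their own change
print(len(log.history()))  # 1
```

Each stored entry carries who asked, who approved, and when the change was recorded, which is the historical picture of changes and approvals the step calls for.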

With the pandemic causing a surge in the number of remote workers and devices in use, businesses must take extra care to ensure that every endpoint is secured and up to date with relevant security patches. Crucially, firewalls and management servers should also be physically protected, with only designated personnel permitted to access them.

As with every process, the tidier it is, the more efficient it is. Rule documentation and naming conventions should be enforced to keep the rule base as organized as possible, and identical rules should be consolidated to keep it concise.
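Finding identical rules is mechanical enough to automate. The sketch below groups rules whose source, destination, service, and action all match, ignoring their names. The `Rule` class and its plain-string fields are hypothetical simplifications, not any vendor’s rule schema, and the sketch only catches exact duplicates: shadowed or partially overlapping rules need address- and port-range analysis, which it does not attempt.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Rule:
    """Hypothetical, simplified firewall rule with plain-string fields."""
    name: str
    source: str
    destination: str
    service: str
    action: str  # "allow" or "deny"

    def signature(self) -> Tuple[str, str, str, str]:
        # Everything that determines the rule's effect, excluding its name.
        return (self.source, self.destination, self.service, self.action)


def find_duplicates(rules: List[Rule]) -> List[List[str]]:
    """Return groups of rule names that share an identical signature."""
    groups: Dict[Tuple[str, str, str, str], List[str]] = defaultdict(list)
    for rule in rules:
        groups[rule.signature()].append(rule.name)
    # Names within a group keep rule-base order, so the first is the one to keep.
    return [names for names in groups.values() if len(names) > 1]


rules = [
    Rule("web-in", "any", "10.0.1.10", "tcp/443", "allow"),
    Rule("ssh-admin", "10.9.0.0/24", "10.0.1.10", "tcp/22", "allow"),
    Rule("web-https", "any", "10.0.1.10", "tcp/443", "allow"),
]
print(find_duplicates(rules))  # [['web-in', 'web-https']]
```

In a first-match rule base, a later exact duplicate can never match traffic that the earlier copy has not already matched, so keeping the first occurrence and removing the rest leaves the firewall’s behavior unchanged.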

Now it’s time to assess each rule and identify those that are particularly risky and prioritize them by severity. Are there any that violate corporate security policies? Do some have “ANY” and a permissive action? Make a list of these rules and analyze them to prepare plans for remediation and compliance.
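A first pass at this triage can be scripted. The sketch below scores each permissive rule by how many of its source, destination, and service fields are “ANY”, then lists the flagged rules most severe first. The scoring scheme and the `Rule` fields are assumptions for illustration; a real assessment would also weigh corporate policy, asset sensitivity, and exposure.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Rule:
    """Hypothetical, simplified firewall rule with plain-string fields."""
    name: str
    source: str
    destination: str
    service: str
    action: str  # "allow" or "deny"


def risk_score(rule: Rule) -> int:
    """Assumed scoring: one point per 'ANY' field, only for permissive rules."""
    if rule.action.lower() != "allow":
        return 0  # deny rules are not flagged by this check
    fields = (rule.source, rule.destination, rule.service)
    return sum(1 for value in fields if value.strip().upper() == "ANY")


def risky_rules(rules: List[Rule]) -> List[Tuple[str, int]]:
    """Return (name, score) for flagged rules, most severe first."""
    scored = [(rule.name, risk_score(rule)) for rule in rules]
    flagged = [item for item in scored if item[1] > 0]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


rules = [
    Rule("web-in", "ANY", "10.0.1.10", "tcp/443", "allow"),
    Rule("legacy-any", "ANY", "ANY", "ANY", "allow"),
    Rule("block-rest", "ANY", "ANY", "ANY", "deny"),
]
print(risky_rules(rules))  # [('legacy-any', 3), ('web-in', 1)]
```

The output is the prioritized list the step describes; each entry still needs human analysis before a remediation plan is drawn up.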

Now it’s time to simply hone the first five steps and make these processes as regular and streamlined as possible.

By following the above steps and building out your own process, you can make day-to-day compliance and auditing much more manageable. Not only will you improve your compliance score, you’ll also be able to maintain a sustainable level of compliance without the usual disruption and hard labor caused by cumbersome and expensive manual processes.

To find out more about auditing automation and how you can master compliance, watch my recent webinar and visit our firewall auditing and compliance page.

The IT landscape has changed beyond recognition in the past decade or so. The vast majority of businesses now operate largely in the cloud, which has had a notable impact on their agility and productivity. A recent survey of 1,900 IT and security professionals found that 41 percent of organizations are running more of their workloads in public clouds, compared to just one-quarter in 2019. Even businesses that were not digitally mature enough to take full advantage of the cloud have dramatically altered their strategies to support remote working at scale during the COVID-19 pandemic.

However, with cloud innovation so high up the boardroom agenda, security is often left lagging behind, creating a vulnerability gap that businesses can little afford in the current heightened risk landscape. The same survey found the leading concern about cloud adoption was network security (58%).

Managing organizations’ networks and their security should go hand-in-hand, but, as reflected in the survey, there’s no clear ownership of public cloud security. Responsibility is scattered across SecOps, NOCs and DevOps, and they don’t collaborate in a way that aligns with business interests. We know through experience that this siloed approach hurts security, so what should businesses do about it? How can they bridge the gap between NetOps and SecOps to keep their network assets secure and prevent missteps?

Today’s digital infrastructure demands the collaboration, perhaps even the convergence, of NetOps and SecOps in order to achieve maximum security and productivity. While the majority of businesses do have open communication channels between the two departments, there is still a large proportion of network and security teams working in isolation. This creates unnecessary friction, which can be problematic for service-based businesses that are trying to deliver the best possible end-user experience.

The reality is that NetOps and SecOps share several commonalities. They are both responsible for critical aspects of a business and have to navigate constantly evolving environments, often under extremely restrictive conditions. Agility is particularly important for security teams in order for them to keep pace with emerging technologies, yet deployments are often stalled or abandoned at the implementation phase due to misconfigurations or poor execution. As enterprises continue to deploy software-defined networks and public cloud architecture, security has become even more important to the network team, which is why this convergence needs to happen sooner rather than later.

We somehow need to insert the network security element into the NetOps pipeline and seamlessly make it just another step in the process. If we had a way to automatically check whether network connectivity is already enabled as part of the pre-delivery testing phase, that could, at least, save us the heartache of deploying something that will not work.
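One way to picture that pre-delivery check is to test, before a release, each flow the application needs against the rule base it will traverse. The sketch below evaluates a single, ordered rule list with first-match semantics and an implicit final deny. The `Rule` format, `flow_allowed`, and the example addresses are hypothetical. Real traffic often crosses several devices and cloud controls, which this single-device sketch does not model, and it does not represent how AlgoSec performs its own analysis.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule: CIDR or "any" for addresses, None for any port."""
    source: str
    destination: str
    port: Optional[int]
    action: str  # "allow" or "deny"


def _matches(cidr: str, ip: str) -> bool:
    # "any" matches everything; otherwise test network membership.
    return cidr == "any" or ipaddress.ip_address(ip) in ipaddress.ip_network(cidr)


def flow_allowed(rules: List[Rule], src: str, dst: str, port: int) -> bool:
    """First-match evaluation with an implicit deny when nothing matches."""
    for rule in rules:
        if (_matches(rule.source, src) and _matches(rule.destination, dst)
                and (rule.port is None or rule.port == port)):
            return rule.action == "allow"
    return False


rules = [
    Rule("10.0.1.0/24", "10.0.2.10", 5432, "allow"),
    Rule("any", "any", None, "deny"),
]
required_flows = [("10.0.1.5", "10.0.2.10", 5432), ("10.0.1.5", "10.0.2.10", 6379)]
missing = [flow for flow in required_flows if not flow_allowed(rules, *flow)]
print(missing)  # [('10.0.1.5', '10.0.2.10', 6379)]
```

In a pipeline, a non-empty `missing` list could fail the build or trigger a change request before deployment, rather than after users report that the application cannot connect.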

Thankfully, there are tools available that can bring SecOps and NetOps closer together, such as Cisco ACI, Cisco Secure Workload, and AlgoSec Security Management Solution. Cisco ACI, for instance, is a tightly coupled, policy-driven solution that integrates software and hardware, allowing for greater application agility and data center automation. Cisco Secure Workload (previously known as Tetration) is a micro-segmentation and cloud workload protection platform that offers multi-cloud security based on a zero-trust model.

When combined with AlgoSec, Cisco Secure Workload is able to map existing application connectivity and automatically generate and deploy security policies on different network security devices, such as ACI contracts, firewalls, routers, and cloud security groups. So, while Cisco Secure Workload takes care of enforcing security at each and every endpoint, AlgoSec handles network management. This is NetOps and SecOps convergence in action, allowing for 360-degree oversight of network and security controls for threat detection across entire hybrid and multi-vendor frameworks.

While the utopian harmony of NetOps and SecOps may be some way off, using existing tools, processes and platforms to bridge the divide between the two departments can mitigate the ‘silo effect’, resulting in stronger, safer and more resilient operations.

We recently hosted a webinar with Doug Hurd from Cisco and Henrik Skovfoged from Conscia discussing how you can bring NetOps and SecOps teams together with Cisco and AlgoSec. You can watch the recorded session here.

In order for businesses to succeed in 2021 and beyond, agility and responsiveness are critical. Data is the new currency, and businesses need to be able to access theirs instantly and securely in order to make the rapid-fire business decisions that will make or break their long-term strategies. One way IT can improve responsiveness and business agility is by moving business applications to the cloud.

When migrating applications to the cloud, the need for network security is often overlooked. When this happens, applications are deployed in the cloud without adequate security and compliance measures in place, or, conversely, the security team steps in and halts the migration process.

This puts the company at risk: inadequate security makes it easier for hackers to access the network and mount an attack against the company – exposing the company to financial losses and legal repercussions. Moreover, if the business is unable to respond to market demands in a timely fashion, there are clear financial implications.

Cloud migration comes with several benefits, but it also exposes a number of security risks. With every advantage comes a cost, and it is up to businesses to do whatever they can to mitigate that risk in order to leverage the cloud to its fullest potential.

An AlgoSec survey found that organizations reported a range of problems when migrating to public clouds, with 44% having difficulty managing security policies post-migration and nearly a third struggling to map application traffic flows at the start. Respondents also had concerns about applications in the cloud, with the greatest worries being cyberattacks (58%) and unauthorized access (53%), followed by application outages and misconfigured cloud security controls.

But this shouldn’t stop progress from happening. What organizations need is security policy automation that supports the DevOps methodology and, more importantly, the DevSecOps approach to building a security foundation. This solution needs to be able to automatically copy the firewall rules and then make the necessary modifications to map those rules to the new objects as they are applied to each new environment in the DevOps lifecycle. With the right automation solution, security can be baked into the entire process.
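To make "copy the rules, then map them to the new objects" concrete, here is a minimal sketch. It assumes rules refer to named network objects and that each environment provides an object-name mapping. The `Rule` fields and `promote_rules` are hypothetical and do not reflect any vendor's data model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Rule:
    """Simplified firewall rule that refers to named network objects (hypothetical)."""
    name: str
    source: str
    destination: str
    service: str
    action: str = "allow"


def promote_rules(rules: list[Rule], object_map: dict[str, str]) -> list[Rule]:
    """Copy rules into a new environment, renaming each object via object_map.

    Raises KeyError listing any object with no mapping, so an unmapped object
    stops the pipeline instead of silently producing a broken rule.
    """
    missing = sorted(
        {obj for r in rules for obj in (r.source, r.destination) if obj not in object_map}
    )
    if missing:
        raise KeyError(f"No mapping for objects: {missing}")
    # dataclasses.replace copies each rule, swapping only the remapped fields
    return [
        replace(r, source=object_map[r.source], destination=object_map[r.destination])
        for r in rules
    ]
```

Failing loudly on an unmapped object is a deliberate choice in this sketch. A silently skipped rule would recreate the "deployed but doesn't work" problem further down the pipeline.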

Obtaining an inventory of applications is a key requirement when migrating to the cloud. Most businesses have two types of applications – enterprise and departmental – and it should be relatively easy to obtain the necessary connectivity information needed to migrate them to the cloud. The key here is to know that these applications exist.

Once the list of applications is in place, you can move onto the next stage in the process of closing the security gap as you migrate to the cloud: identifying and sealing any vulnerabilities in the server that could be exploited by a hacker, understanding network connectivity requirements and application attributes, such as the number of servers, and associated business processes. These elements help determine the complexity involved in migrating applications.

Several attributes can affect the complexity of migrating an application to the cloud, including its specific connectivity requirements and the firewall rules that allow or deny that connectivity. Mapping this connectivity provides a deeper understanding of network traffic, which in turn provides insight into the flows you will need to migrate and maintain with the application in the cloud. The more applications that use a server, the harder it is to migrate an application that depends on that server. It may be necessary to migrate the server itself or migrate multiple applications at the same time.
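The point about shared servers can be expressed as a graph problem. If applications are linked whenever they share a server, each connected group is a set of applications that probably has to move together, or whose shared server has to move first. The sketch below uses a standard union-find. It assumes you already have an application-to-server inventory. The function name and sample data are hypothetical.

```python
from collections import defaultdict


def migration_groups(app_servers: dict[str, set[str]]) -> list[set[str]]:
    """Group applications that (transitively) share servers, largest group first."""
    parent = {app: app for app in app_servers}

    def find(a: str) -> str:
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving keeps trees shallow
            a = parent[a]
        return a

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    # Index applications by server, then merge every app that uses the same server
    users_of: dict[str, list[str]] = defaultdict(list)
    for app, servers in app_servers.items():
        for server in servers:
            users_of[server].append(app)
    for apps in users_of.values():
        for other in apps[1:]:
            union(apps[0], other)

    groups: dict[str, set[str]] = defaultdict(set)
    for app in app_servers:
        groups[find(app)].add(app)
    return sorted(groups.values(), key=lambda g: (-len(g), sorted(g)))
```

For example, if `crm` shares a database with `billing` and a web server with `reports`, all three end up in one group, and an unrelated `hr` application forms its own group.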

So how do you generate documentation of application connectivity? The obvious choice is to employ a solution that automatically maps the various network traffic flows, servers, and firewall rules for each application. If you do not have access to such an automation solution, manual documentation, however tedious, will provide the necessary information.

The AlgoSec Security Management Suite (ASMS) makes it easy to support your cloud migration journey, ensuring that the migration does not block critical business services and that it meets compliance requirements.

AlgoSec’s powerful AutoDiscovery capabilities help you understand the network flows in your organization. You can automatically connect the recognized traffic flows to the business applications that use them. AlgoSec seamlessly manages the network security policy across your entire hybrid network estate. AlgoSec proactively checks every proposed firewall rule change request against your network security strategy to ensure that the change doesn’t introduce risk or violate compliance requirements.
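Checking a proposed rule change against a security strategy comes down to evaluating the request against a set of policy conditions before it is approved. The sketch below is illustrative only and is not how AlgoSec implements its risk checks. The risky-port list, the sensitive and internal networks, and the breadth threshold are all hypothetical placeholders for your own policy. It also assumes IPv4 addresses throughout.

```python
import ipaddress
from dataclasses import dataclass

# Hypothetical policy inputs; derive real values from your own security strategy (IPv4 only here)
RISKY_PORTS = {23: "telnet", 445: "smb", 3389: "rdp"}
SENSITIVE_ZONES = [ipaddress.ip_network("10.20.0.0/16")]  # e.g. a regulated data zone
INTERNAL_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]
MAX_SOURCE_ADDRESSES = 256


@dataclass(frozen=True)
class ChangeRequest:
    source: str       # CIDR, e.g. "10.1.2.0/24" or "0.0.0.0/0"
    destination: str  # CIDR
    port: int


def assess(cr: ChangeRequest) -> list[str]:
    """Return policy findings for a proposed rule; an empty list means no issues found."""
    findings = []
    src = ipaddress.ip_network(cr.source)
    dst = ipaddress.ip_network(cr.destination)
    if cr.port in RISKY_PORTS:
        findings.append(f"risky service {RISKY_PORTS[cr.port]} (port {cr.port})")
    if src.num_addresses > MAX_SOURCE_ADDRESSES:
        findings.append(f"source too broad: {src.num_addresses} addresses")
    touches_sensitive = any(dst.overlaps(zone) for zone in SENSITIVE_ZONES)
    from_internal = any(src.subnet_of(net) for net in INTERNAL_NETWORKS)
    if touches_sensitive and not from_internal:
        findings.append("source outside internal networks reaches a sensitive zone")
    return findings
```

A request for `0.0.0.0/0` to a host in the sensitive zone on port 3389 would raise all three findings. A narrow internal HTTPS request would pass cleanly.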

We have published a whitepaper that delves into the complexities of migrating to the cloud and the systematic approach that organizations should embrace when approaching these types of projects. You can download a copy here.

Cloud security is a broad domain with many different aspects, some of them human. Even the most sophisticated and secure systems can be jeopardized by human elements such as mistakes and miscalculations. Many organizations are susceptible to such dangers, especially during critical technology configurations and transfers. For example, digital transformation and cloud migration projects may result in misconfigurations that leave your critical applications vulnerable and your company’s sensitive data an easy target for cyber-attacks.

The good news is that Prevasio and other cybersecurity providers have introduced new technologies to help organizations improve their cybersecurity. Today, we discuss Cloud Security Posture Management (CSPM) and how it can help prevent not just misconfigurations in cloud systems but also supply chain attacks.

First, we need to fully understand what CSPM is before exploring how it can prevent cloud security issues. CSPM is, first and foremost, the practice of adopting security best practices, along with automated tools, to harden and manage an organization’s security posture across cloud-based services such as Software as a Service (SaaS), Infrastructure as a Service (IaaS), and Platform as a Service (PaaS).

These practices and tools can be used to identify and solve many security issues within a cloud system. Not only is CSPM critical to the growth and integrity of your cloud infrastructure, but it is also essential for organizations subject to CIS, GDPR, PCI-DSS, NIST, HIPAA and similar compliance requirements.

There are numerous cloud service providers, such as AWS, Azure, Google Cloud, and others, that provide hyperscale cloud-hosted platforms as well as various cloud compute services and solutions to organizations that previously faced many hurdles with their on-premises infrastructure. When you migrate your organization to these platforms, you can scale effectively and cut down on on-premises infrastructure spending.

However, if not handled appropriately, cloud migration comes with potential security risks. For instance, a typical lift-and-shift migration of a legacy application may leave that application inadequately hardened or reconfigured for safe use in a public cloud setup. This may result in security loopholes that expose the network and data to breaches and attacks.

Cloud misconfiguration can happen in multiple ways. However, the most significant risk is not knowing that you are endangering your organization with such misconfigurations. That being the case, below are a few examples of cloud misconfigurations that can be identified and solved by CSPM tools such as Prevasio within your cloud infrastructure:

These are just a few examples of common misconfigurations that can be found in your cloud infrastructure, and CSPM can provide further advanced security and performance benefits.
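At its core, a CSPM tool runs a library of checks against an inventory of cloud resources and reports each violation. The sketch below shows that pattern with two illustrative checks: a publicly readable storage bucket, and SSH open to the internet. It assumes a simplified, provider-neutral inventory format. A real tool collects this data through each cloud provider's APIs, and its checks are not the hypothetical ones shown here.

```python
from collections.abc import Callable
from dataclasses import dataclass

# Hypothetical provider-neutral inventory record: a plain dict with "id" and "type" keys
Resource = dict


@dataclass(frozen=True)
class Check:
    check_id: str
    description: str
    applies_to: str                         # resource type the check inspects
    is_violation: Callable[[Resource], bool]


CHECKS = [
    Check("STORAGE-PUBLIC", "Storage bucket allows public read", "bucket",
          lambda r: r.get("public_read", False)),
    Check("SG-OPEN-SSH", "Security group opens SSH (22) to 0.0.0.0/0", "security_group",
          lambda r: any(rule["port"] == 22 and rule["cidr"] == "0.0.0.0/0"
                        for rule in r.get("ingress", []))),
]


def scan(inventory: list[Resource]) -> list[tuple[str, str]]:
    """Run every applicable check on every resource; return (resource id, check id) findings."""
    return [
        (res["id"], chk.check_id)
        for res in inventory
        for chk in CHECKS
        if res["type"] == chk.applies_to and chk.is_violation(res)
    ]
```

Keeping checks as data, rather than hard-coding them into the scanner, makes it easy to add rules mapped to frameworks such as CIS without touching the scan loop.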

CSPM continuously monitors and assesses your cloud infrastructure. Many of the benefits of securing your cloud infrastructure with CSPM boil down to peace of mind: the reassurance of knowing that your organization’s critical data is safe.

It also provides long-term visibility into your cloud networks, enables you to identify policy violations, and allows you to remediate misconfigurations to ensure proper compliance. Furthermore, CSPM provides remediation to safeguard cloud assets, as well as built-in compliance libraries.

Cloud technology is here to stay, and with CSPM, you can advance the cloud security posture of your organization. To summarize, here is what you should expect from CSPM cloud security:

With automation taking every industry by storm, CSPM is the future of all-inclusive cloud security. With cloud security posture management, you can do more than remediate configuration issues and monitor your organization’s cloud infrastructure.

You’ll also be able to establish cloud integrity from existing systems and determine which technologies, tools, and cloud assets are most widely used. CSPM’s ability to monitor cloud assets and cyber threats and present them in user-friendly dashboards is another benefit, which you can use to explore, analyze and quickly explain findings to your team(s) and upper management. It can even help you find knowledge gaps in your team and decide which training or mentorship opportunities your security team or other teams in the organization might require.

Cloud security is a relatively new domain whose importance and popularity are growing by the day. CSPM is widely used by organizations looking to safely make the most of what hyperscale cloud platforms offer, such as agility, speed, and cost savings. The downside is that the cloud also comes with certain risks, such as misconfigurations, vulnerabilities, and internal and external supply chain attacks that can expose your business to cyber-attacks.

CSPM is responsible for protecting users, applications, workloads, data, and much more in an accessible and efficient manner under the Shared Responsibility Model. With CSPM tools, any organization keen on enhancing its cloud security can detect errors, meet compliance regulations, and orchestrate the best possible defenses.

Prevasio’s next-gen CSPM solution focuses on three best practices: a light-touch, agentless approach; easy, user-friendly configuration; and security findings with context that is easy to read and share, so that all appropriate users and stakeholders have visibility. Our cloud security offerings are ideal for organizations that want to go beyond misconfiguration checks, legacy compliance or traditional vulnerability scanning.

We offer an accelerated visual assessment of your cloud infrastructure and automated analysis of a wide range of cloud assets. We identify policy errors, supply-chain threats, and vulnerabilities, and align all of these findings with your unique business goals.

What we provide are prioritized recommendations for well-orchestrated cloud security risk mitigations. To learn more about us, what we do, our cloud security offerings, and how we can help your organization prevent cloud infrastructure attacks, read all about it here.

Before I became a Sales Engineer, I started my career in operations. I don’t remember the first time I heard the term zero trust, but all I knew was that it was very important and everyone was striving to reach that level of security. Today I’ll get into how AlgoSec can help achieve those goals, but first let’s have a quick recap of what zero trust is in the first place. There are countless whitepapers and frameworks that define zero trust much better than I can, but they are also multiple pages long, so I’ll keep it brief.

Traditionally, when designing a network, you may have different zones, and each zone might have different levels of access. In many of these designs, a lot of trust is granted once someone is in a certain zone. For example, once someone gets to their workplace at the hospital, the nursing home, the dental center or any other medical office and completes all the necessary authentication steps (proper company laptop, credentials, etc.), they potentially have free rein over everything. This is a very simple example, and in a real-world scenario there would hopefully be many more safeguards in place. But what does happen in real-world scenarios is that devices still end up trusted more than they should be, and from my own experience and from working with customers, this happens far too often.

This is becoming increasingly important, especially in the healthcare industry. These days there are many different types of medical devices: some hold sensitive information, some are scanning instruments, and some might even be critical to patient support. More importantly, many are connected to some type of network. Because of this level of connectivity, we need to start shifting toward the idea of zero trust. In healthcare, cybersecurity isn’t just a matter of maintaining the network; it’s about keeping the hospital’s critical operations running smoothly and keeping patient data safe and secure.

Maintaining security policies is critical to achieving zero trust. Below you can see some of the key features that AlgoSec has that can help achieve that goal.

| Feature | Description |
|---|---|
| Security Policy Analysis | Analyze existing security policy sets across all parts of the network (on-premises and cloud) with various vendors. |
| Policy Cleanup | Identify and remove redundant rules, duplicate rules, and more from the first report. |
| Specific Recommendations | Over time, recommendations become more specific, such as identifying unnecessary rules (e.g., a printer talking to a medical device without actual use). |
| Application Perspective | Tie firewall rules to actual applications to understand the business function they support, leading to more targeted security policies. |
| Granularity & Visibility | Higher level of visibility and granularity in security policies, focusing on specific application flows rather than broad network access. |
| Security Posture by Application | View and assess security risks and vulnerabilities at the application level, improving overall security posture. |
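To illustrate what the policy cleanup step looks for, here is a minimal sketch of redundancy and shadowing detection under first-match semantics. A later rule is flagged if an earlier rule already matches every packet the later rule would match. The `Rule` model is hypothetical and deliberately simplified: one source network, one destination network, and a contiguous port range, with no protocol, zone or object-group handling. It is not AlgoSec's analysis engine.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    src: ipaddress.IPv4Network
    dst: ipaddress.IPv4Network
    ports: range          # e.g. range(443, 444) for a single port
    action: str           # "allow" or "deny"


def covers(a: Rule, b: Rule) -> bool:
    """True if rule a matches every packet that rule b matches."""
    return (
        b.src.subnet_of(a.src)
        and b.dst.subnet_of(a.dst)
        and a.ports.start <= b.ports.start
        and b.ports.stop <= a.ports.stop
    )


def cleanup_report(rules: list[Rule]) -> dict[str, str]:
    """Flag rules that can never match because an earlier rule covers them."""
    report = {}
    for i, later in enumerate(rules):
        for earlier in rules[:i]:
            if covers(earlier, later):
                kind = "redundant" if earlier.action == later.action else "shadowed (conflicting action)"
                report[later.name] = f"{kind} by {earlier.name}"
                break
    return report
```

A redundant rule can usually be removed safely. A shadowed rule with a conflicting action deserves a closer look, because its author probably intended it to take effect.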

One of my favorite aspects of the AlgoSec platform is that we not only help optimize your security policies, but we also start to look at security from an application perspective. Traditionally, firewall change requests come in asking for very specific things: “Source A to Destination B using Protocol C.” But using AlgoSec, we tie those rules to actual applications to see what business function they are supporting. Knowing the specific flows and tying them to a specific application allows us to keep a closer eye on the actual security policies we need to create. This helps with the zero trust journey, because that higher level of visibility and granularity keeps the rules more specific. Instead of a change request coming in that allows wide-open access between two subnets, the application can be designed for only the access that is required. It also allows for a better overall view of the security posture.
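The contrast between a subnet-wide request and the flows an application actually needs can be shown with a small comparison. This sketch assumes you already have a documented list of host-level application flows. The `AppFlow` structure, `review_request`, and the sample "pacs" imaging application are hypothetical. The example is not based on how AlgoSec stores application definitions.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class AppFlow:
    """One documented flow of a business application (hypothetical structure)."""
    application: str
    src: str   # host address
    dst: str   # host address
    port: int


def review_request(src_cidr: str, dst_cidr: str, port: int, flows: list[AppFlow]) -> dict:
    """Compare a subnet-wide change request with the application flows it actually serves."""
    src = ipaddress.ip_network(src_cidr)
    dst = ipaddress.ip_network(dst_cidr)
    served = [
        f for f in flows
        if f.port == port
        and ipaddress.ip_address(f.src) in src
        and ipaddress.ip_address(f.dst) in dst
    ]
    return {
        "applications": sorted({f.application for f in served}),
        # Source/destination address pairs the request would open, versus the pairs actually needed
        "requested_pairs": src.num_addresses * dst.num_addresses,
        "needed_rules": sorted({(f.src, f.dst, port) for f in served}),
    }
```

For example, a `/24` to `/24` HTTPS request opens 65,536 address pairs. If only two hosts in one application talk to a single server, two host-level rules would be enough.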

Zero trust, like many other ideas and frameworks in our industry, might seem far-fetched at first. We ask ourselves how we get there, or how we implement it without it becoming so cumbersome that we give up. I think it’s normal to be a bit pessimistic about achieving the goal, and it’s completely fine to treat some projects as moving targets without a hard deadline. There usually isn’t a magic bullet that accomplishes our goals, especially something like achieving zero trust. Multiple initiatives and projects are necessary. With AlgoSec’s expertise in application connectivity and policy management, we can be a key partner in that journey.

Every business needs to manage risks. If not, they won’t be around for long. The same is true in cloud computing. As more companies move their resources to the cloud, they must ensure efficient risk management to achieve resilience, availability, and integrity.

Yes, moving to the cloud offers more advantages than staying in on-premises environments. But enterprises must remain meticulous, because they have too much to lose.

For example, they must protect sensitive customer data and business resources and meet cloud security compliance requirements.

The key to these – and more – lies in cloud risk management. That’s why in this guide, we’ll cover everything you need to know about managing enterprise risk in cloud computing, the challenges you should expect, and the best ways to navigate it.

If you stick around, we’ll also discuss the skills cloud architects need for risk management.

In cloud computing, risk management refers to the process of identifying, assessing, prioritizing, and mitigating the risks associated with cloud computing environments.

It’s a process of being proactive rather than reactive. You want to identify and prevent an unexpected or dangerous event that can damage your systems before it happens.

Most people will be familiar with Enterprise Risk Management (ERM). Organizations use ERM to prepare for and minimize risks to their finances, operations, and goals.

The same concept applies to cloud computing.

Cyber threats have grown so much in recent years that your organization is almost always a target. For example, a recent report revealed 80 percent of organizations experienced a cloud security incident in the past year.

While cloud-based information systems have many security advantages, they may still be exposed to threats. Unfortunately, these threats are often catastrophic to your business operations.

This is why risk management in cloud environments is critical.

Through effective cloud risk management strategies, you can reduce the likelihood or impact of risks arising from cloud services.

Managing risks is a shared responsibility between the cloud provider and the customer – you. While the provider ensures secure infrastructure, you need to secure your data and applications within that infrastructure.

Some types of risks organizations face in cloud environments are:

But risk assessment and management aren’t always straightforward. You will face certain challenges – and we’ll discuss them below:

Most organizations face difficulties when managing cloud or third-party/vendor risks. These risks are closely tied to the challenges that cloud deployment and usage create.

Understanding the cloud security challenges sheds more light on your organization’s potential risks.

Cloud security is complex, particularly for enterprises. For example, many organizations use multiple cloud providers.

They may also have hybrid environments by combining on-premise systems and private clouds with multiple public cloud providers.

You’ll admit this poses more complexities, especially when managing configurations, security controls, and integrations across different platforms.

Unfortunately, this means organizations leveraging the cloud will likely become dependent on cloud services.

So, what happens when these services become unavailable?

Your organization may be unable to operate, or your customers may be unable to access your services.

Thus, there’s a need to manage these continuity and lock-in risks.

Cloud consumers have limited visibility and control. First, moving resources to the public cloud means you’ll lose many controls you had on-premises.

Cloud service providers don’t grant access to shared infrastructure. Plus, your traditional monitoring infrastructure may not work in the cloud.

So, you can no longer deploy network taps or intrusion prevention systems (IPS) to monitor and filter traffic in real-time. And if you cannot directly access the data packets moving within the cloud or the information contained within them, you lack visibility or control.

Lastly, cloud service providers may provide logs of cloud workloads. But this is far from the real deal. Alerts are never really enough. They’re not enough for investigations, identifying the root cause of an issue, and remediating it.

Investigating, in this case, requires access to data packets, and cloud providers don’t give you that level of data.

It can be quite challenging to comply with regulatory requirements. For instance, there are blind spots when traffic moves between public clouds or between public clouds and on-premises infrastructures.

You can’t monitor and respond to threats like man-in-the-middle attacks. This means if you don’t always know where your data is, you risk violating compliance regulations.

With laws like GDPR, CCPA, and other privacy regulations, managing cloud data security and privacy risks has never been more critical.

Part of cloud risk management is understanding your existing systems and processes and how they work.

Understanding the requirements is essential for any service migration, whether it is to the cloud or not. This must be taken into consideration when evaluating the risk of cloud services. How can you evaluate a cloud service for requirements you don’t know?

Organizations struggle to have efficient cloud risk management during deployment and usage because of evolving risks.

Organizations often develop extensive risk assessment questionnaires based on audit checklists, only to discover that the results are virtually impossible to assess.

While checklists might be useful in your risk assessment process, you shouldn’t rely on them.

Here’s what efficient risk management in cloud environments looks like:

The first stage of every risk management – whether in cloud computing or financial settings – is identifying the potential risks.

You want to answer questions like, what types of risks do we face? For example, are they data breaches? Unauthorized access to sensitive data? Or are they service disruptions in the cloud?

The next step is analysis. Here, you evaluate the likelihood of the risk happening and the impact it can have on your organization. This lets you prioritize risks and know which ones have the most impact.
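A common way to carry out this analysis is a likelihood × impact score on a small ordinal scale, with the highest scores handled first. The sketch below assumes 1–5 scales and uses hypothetical tier thresholds. Real programs often use qualitative matrices or quantitative methods instead, so read this as one simple option rather than a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # 1 (rare) .. 5 (almost certain)
    impact: int      # 1 (negligible) .. 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[tuple[str, int, str]]:
    """Rank risks by likelihood x impact and bucket them into treatment tiers."""
    def tier(score: int) -> str:
        # Hypothetical thresholds on the 1-25 scale; tune to your own risk appetite
        return "high" if score >= 15 else "medium" if score >= 8 else "low"

    # sorted() is stable, so equal scores keep their original order
    ranked = sorted(risks, key=lambda r: r.score, reverse=True)
    return [(r.name, r.score, tier(r.score)) for r in ranked]
```

With a data breach rated likelihood 3 and impact 5, and a service disruption rated 2 and 4, the breach scores 15 and lands in the "high" tier, ahead of the disruption at 8.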

For instance, what consequences will a data breach have on the confidentiality and integrity of the information stored in the cloud?

Once risks are identified, it’s time to implement the right risk mitigation strategies and controls.

The cloud provider will typically offer security controls you can select or configure. However, you can consider alternative or additional security measures that meet your specific needs.

Some security controls and mitigation strategies that you can implement include:

Due to the frequency and complexity of cyber threats, authorities in various industries are releasing and updating recommendations for cloud computing. These requirements outline best practices that companies must adhere to in order to avoid and respond to cyber-attacks.

This makes regulatory compliance an essential part of identifying and mitigating risks.

It’s important to first understand the relevant regulations, such as PCI DSS, ISO 27001, GDPR, CCPA, and HIPAA. Then, understand each one’s requirements. For example, what are your obligations for security controls, breach notifications, and data privacy?

Part of ensuring regulatory compliance in your cloud risk management effort is assessing the cloud provider’s capabilities.

Do they meet the industry compliance requirements? What are their previous security records? Have you assessed their compliance documentation, audit reports, and data protection practices?

Lastly, it’s important to implement data governance policies that prescribe how data is stored, handled, classified, accessed, and protected in the cloud.

Cloud risks are constantly evolving. This could be due to technological advancements, revised compliance regulations and frameworks, new cyber threats, insider threats like misconfigurations, and expanding cloud service models like Infrastructure-as-a-Service (IaaS).

What does this mean for cloud computing customers like you?

There’s an urgent need to conduct regular security monitoring and threat intelligence to address emerging risks proactively.

It has to be an ongoing process of performing vulnerability scans of your cloud infrastructure. This includes log management, periodic security assessments, patch management, user activity monitoring, and regular penetration testing exercises.

Ultimately, there’s still a chance your organization will face cyber incidents. Part of cloud risk management is implementing cyber incident response plans (CIRP) that help contain threats.

Whether these incidents are low-level risks that were not prioritized or high-impact risks you missed, an incident response plan will ensure business continuity.

It’s also important to gather evidence through digital forensics and analyze system artifacts after incidents.

Implementing data backup and disaster recovery into your risk management ensures you minimize the impact of data loss or service disruptions. For example, backing up data and systems regularly is important.

Some cloud services may offer redundant storage and versioning features, which can be valuable when your data is corrupted or accidentally deleted.

Additionally, it’s necessary to document backup and recovery procedures to ensure consistency and guide architects.

Achieving cloud risk management involves combining the risk management processes above with internal controls and corporate governance. Here are some best practices for effective cloud risk management:

1. Careful Selection of Your Cloud Service Provider (CSP)

Carefully select a reliable cloud service provider (CSP). You can do this by evaluating factors like contract clarity, ethics, legal liability, viability, security, compliance, availability, and business resilience. Note that it’s important to assess if the CSP relies on other service providers and adjust accordingly.

2. Establishing a Cloud Risk Management Framework

Consider implementing cloud risk management frameworks for a structured approach to identifying, assessing, and mitigating risks. Some notable frameworks include:

3. Collaboration and Communication with Stakeholders

You should always inform all stakeholders about potential risks, their impact, and incident response plans. A collaborative effort can improve risk assessment and awareness, help your organization leverage collective expertise, and facilitate effective decision-making against identified risks.

4. Implement Technical Safeguards

Deploying technical safeguards like cloud access security broker (CASB) in cloud environments can enhance security and protect against risks. CASB can be implemented in the cloud or on-premise and enforces security policies for users accessing cloud-based resources.

5. Set Controls Based on Risk Treatment

After identifying risks and determining your risk appetite, it’s important to implement dedicated measures to mitigate them. Develop robust data classification and lifecycle mechanisms and integrate processes that outline data protection, erasure, and hosting into your service-level agreements (SLA).

6. Employee Training and Awareness Programs

What’s cloud risk management without training personnel? At the crux of risk management is identifying potential threats and taking steps to prevent them. Insider threats and the human factor contribute significantly to threats today.

So, training employees on what to do to prevent risks during and after incidents can make a difference.

7. Adopt an Optimized Cloud Service Model

Choose a cloud service model that suits your business, minimizes risks, and optimizes your cloud investment cost.

8. Continuous Improvement and Adaptation to Emerging Threats

As a rule of thumb, you should always look to stay ahead of the curve. Conduct regular security assessments and audits to improve cloud security posture and adapt to emerging threats.

Implementing effective cloud risk management requires having skilled architects on board.

Through their in-depth understanding of cloud platforms, services, and technologies, these professionals can help organizations navigate complex cloud environments and design appropriate risk mitigation strategies.

The importance of prioritizing risk management in cloud environments cannot be overstated. It allows you to proactively identify risks, assess, prioritize, and mitigate them. This enhances the reliability and resilience of your cloud systems, promotes business continuity, optimizes resource utilization, and helps you manage compliance.

Do you want to automate your cloud risk assessment and management? Prevasio is the ideal option for identifying risks and achieving security compliance. Request a demo now to see how Prevasio’s agentless platform can protect your valuable assets and streamline your multi-cloud environments.

Cloud-native organizations need an efficient and automated way to identify the security risks across their cloud infrastructure. Sergei Shevchenko, Prevasio’s Co-Founder & CTO, breaks down the essence of CSPM and explains how CSPM platforms enable organizations to improve their cloud security posture and prevent future attacks on their cloud workloads and applications.

In 2019, Gartner recommended that enterprise security and risk management leaders should invest in CSPM tools to “proactively and reactively identify and remediate these risks”. By “these”, Gartner meant the risks of successful cyberattacks and data breaches due to “misconfiguration, mismanagement, and mistakes” in the cloud. So how can you detect these intruders now and prevent them from entering your cloud environment in future? Cloud Security Posture Management is one highly effective way but is often misunderstood.

There are many solid reasons for organizations to move to the cloud. Migrating from a legacy, on-premises infrastructure to a cloud-native infrastructure can lower IT costs and help make teams more agile. Moreover, cloud environments are more flexible and scalable than on-prem environments, which helps to enhance business resilience and prepares the organization for long-term opportunities and challenges.

That said, if your production environment is in the cloud, it is also prone to misconfiguration errors, which expose the firm to all kinds of security threats and risks. Think of this environment as a building whose physical security is your chief concern. If there are gaps in this security, for example, a window that doesn’t close all the way or a lock that doesn’t work properly, you will try to fix them as a priority to prevent unauthorized or malicious actors from accessing the building.

But since this building is in the cloud, many older security mechanisms will not work for you. Thus, simply covering a hypothetical window or installing an additional hypothetical lock cannot guarantee that an intruder won’t ever enter your cloud environment. This intruder, who may be a competitor, enemy spy agency, hacktivist, or anyone with nefarious intentions, may try to access your business-critical services or sensitive data. They may also try to persist inside your environment for weeks or months in order to maintain access to your cloud systems or applications. Old-fashioned security measures cannot keep these bad guys out. They also cannot prevent malicious outsiders or worse, insiders from cryptojacking your cloud resources and causing performance problems in your production environment.

The main purpose of a CSPM is to help organizations minimize risk by providing cloud security automation, ensuring multi-cloud environments remain secure as they grow in scale and complexity. But as organizations add scale and complexity to their multi-cloud environments, how exactly can a CSPM help them minimize these risks and better protect their cloud environments?

Think of a CSPM as a building inspector who visits the building regularly (say, every day, or several times a day) to inspect its doors, windows, and locks. The inspector identifies weaknesses in these elements and produces a report detailing the gaps. The best, most experienced inspectors also recommend how to resolve these security issues as quickly as possible.

Like a building inspector, a CSPM gives organizations the tools they need to secure their multi-cloud environments efficiently, in a way that scales more readily than manual processes as cloud deployments grow. Here are some of the key benefits of a CSPM:

Efficient early detection: A CSPM tool automatically and continuously monitors your cloud environment. It scans your cloud production environment to detect misconfiguration errors, raises alerts, and can even predict where these errors may appear next.

Responsive risk remediation: With a CSPM in your cloud security stack, you can also automatically remediate security risks and hidden threats, thus shortening remediation timelines and protecting your cloud environment from threat actors.

Consistent compliance monitoring: CSPMs also support automated compliance monitoring, meaning they continuously review your environment for adherence to compliance policies. If they detect drift (non-compliance), appropriate corrective actions will be initiated automatically.
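To make the “inspector” idea concrete, here is a minimal Python sketch of the kind of rule-based checks a CSPM runs on every scan. It assumes a simplified, hypothetical inventory format (plain dictionaries with a `type`, an `id`, and a few settings) and three made-up rules; a real CSPM reads this data from cloud provider APIs and ships a far larger rule set.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical, simplified inventory record: {"type": ..., "id": ..., <settings>}
Resource = dict

@dataclass
class Rule:
    rule_id: str
    resource_type: str
    description: str
    is_violation: Callable[[Resource], bool]

# Illustrative rules; the IDs and setting names are assumptions, not a vendor's schema.
RULES = [
    Rule("STORAGE-001", "bucket", "Bucket allows public read access",
         lambda r: r.get("public_read", False)),
    Rule("STORAGE-002", "bucket", "Bucket encryption at rest is disabled",
         lambda r: not r.get("encrypted", False)),
    Rule("NET-001", "security_group", "SSH (port 22) open to the internet",
         lambda r: any(entry.get("port") == 22 and entry.get("cidr") == "0.0.0.0/0"
                       for entry in r.get("ingress", []))),
]

def scan(inventory: list[Resource]) -> list[tuple[str, str, str]]:
    """Return (resource id, rule id, description) for every failed check."""
    findings = []
    for resource in inventory:
        for rule in RULES:
            if resource.get("type") == rule.resource_type and rule.is_violation(resource):
                findings.append((resource["id"], rule.rule_id, rule.description))
    return findings

inventory = [
    {"type": "bucket", "id": "logs", "public_read": False, "encrypted": True},
    {"type": "bucket", "id": "exports", "public_read": True, "encrypted": False},
    {"type": "security_group", "id": "sg-web",
     "ingress": [{"port": 443, "cidr": "0.0.0.0/0"}, {"port": 22, "cidr": "0.0.0.0/0"}]},
]
for finding in scan(inventory):
    print(finding)
```

Running a scan like this on a schedule, or on every configuration change event, and alerting on new findings is what turns one-off checks into continuous monitoring.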

Using the inspector analogy, it’s important to keep in mind that a CSPM acts as an observer, not a doer. It assesses the building’s security and calls out its weaknesses, but it won’t make changes itself, for example by carrying out intrusive testing. Even so, a CSPM can help you prevent 80% of misconfiguration-related intrusions into your cloud environment. What about the remaining 20%? For this, you need a CSPM that offers something more, such as container scanning.

If your network spans a multi-cloud environment, an agentless CSPM is likely your best option. Here are three main reasons in support of this claim:

1. Closing misconfiguration gaps: An agentless CSPM is especially useful if you’re looking to eliminate misconfigurations across all your cloud accounts, services, and assets.

2. Ensuring continuous compliance: It also detects compliance problems related to three important standards: HIPAA, PCI DSS, and CIS. All three are strict standards with very specific requirements for security and data privacy. It can also detect compliance drift against all three, giving you peace of mind that your multi-cloud environment remains consistently compliant.

3. Comprehensive container scanning: An agentless CSPM can scan container environments to uncover hidden backdoors. Through dynamic behavior analysis, it can detect new threats and supply chain attack risks in cloud containers. It also performs static security analysis of containers to detect vulnerabilities and malware, providing a deep cloud scan in just a few minutes.
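At its core, detecting compliance drift means comparing what is deployed now with an approved baseline. The sketch below assumes both are available as flat dictionaries of setting names to values; the setting names and the baseline values are hypothetical and are not quoted from HIPAA, PCI DSS, or CIS documents.

```python
def detect_drift(baseline: dict, current: dict) -> dict:
    """Return {setting: (expected, actual)} for every setting that differs from the baseline."""
    drift = {}
    for setting, expected in baseline.items():
        actual = current.get(setting)  # a missing setting also counts as drift (actual = None)
        if actual != expected:
            drift[setting] = (expected, actual)
    return drift

# Hypothetical baseline, e.g. an internal interpretation of a compliance benchmark.
baseline = {
    "storage.encryption_at_rest": True,
    "logging.audit_trail_enabled": True,
    "iam.password_min_length": 14,
}
# Hypothetical snapshot of the live environment.
current = {
    "storage.encryption_at_rest": True,
    "logging.audit_trail_enabled": False,
}
print(detect_drift(baseline, current))
```

Treating a missing setting as drift is a deliberately strict choice: a control that silently disappears from the environment is usually as serious as one that was switched off.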

Organizations transitioning to the cloud require robust security concepts to protect their most critical assets, including business applications and sensitive data. Rony Moshkovitch, Prevasio’s co-founder, explains these concepts and why reinforcing a DevSecOps culture would help organizations strike the right balance between security and agility.

In the post-COVID era, enterprise cloud adoption has grown rapidly. Per a 2022 security survey, over 98% of organizations use some form of cloud-based infrastructure. But 27% have also experienced a cloud security incident in the previous 12 months. So, what can organizations do to protect their critical business applications and sensitive data in the cloud?

It is in the organization’s best interest to allow developers to expedite the application lifecycle. At the same time, it’s the security team’s job to support this process in tandem with the developers, helping them deliver a more secure application on time. As organizations migrate their applications and workloads to multi-cloud platforms, it’s incumbent on them to take a shift-left approach to DevSecOps. This enables security teams to build tools and develop best practices and guidelines that let DevOps teams effectively own the security process during application development, instead of spending time responding to risk and compliance violations flagged by the security team. This is where Paved Road, Guardrails, and Least Privilege can add value to your DevSecOps.

Suppose your security team builds numerous tools, establishes best practices, and provides expert guidance. These resources enable your developers to use the cloud safely and protect all enterprise assets and data without spending all their time or energy on these tasks. They can achieve these objectives because the security team has built a “paved road” with strong “guardrails” for the entire organization to follow and adopt.

By following and implementing good practices, such as building an asset inventory, creating safe templates, and conducting risk analyses for each cloud and cloud service, the security team enables developers to execute their own tasks quickly and safely. Security staff will implement strong controls that no one can violate or bypass. They will also define a controlled exception process, so every exception is tracked and accountability is always maintained.

Over time, your organization may work with more cloud vendors and use more cloud services. In this expanding cloud landscape, the paved road and guardrails will allow users to do their jobs effectively in a security-controlled manner because security is already “baked in” to everything they work with. Moreover, they will be prevented from doing anything that may increase the organization’s risk of breaches, thus keeping you safe from the bad guys.

Example #1: Set Baked-in Security Controls

Remember to bake security into the reusable Terraform modules or AWS CloudFormation templates that make up your paved roads. You may apply this tactic when provisioning new infrastructure, creating new storage buckets, or adopting new cloud services. When you create a paved road and implement appropriate guardrails, all your golden modules and templates are secure from the outset, safeguarding your assets and preventing undesirable security events.
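As an illustration of a “golden” building block, here is a hypothetical Python factory a security team might publish for storage buckets. It returns a plain configuration dictionary, not a real Terraform or CloudFormation object, and the setting names are assumptions. Encryption, public-access blocking, and versioning are fixed; developers may only choose non-security options.

```python
# Security settings every paved-road bucket must have (illustrative names, not a provider schema).
LOCKED_DEFAULTS = {
    "encryption": "kms",
    "block_public_access": True,
    "versioning": True,
}

# Options developers are free to choose themselves.
ALLOWED_OPTIONS = {"lifecycle_days", "tags"}

def golden_bucket(name: str, **options) -> dict:
    """Build a bucket definition with security baked in; reject attempts to override it."""
    unexpected = set(options) - ALLOWED_OPTIONS
    if unexpected:
        raise ValueError(f"options not allowed on the paved road: {sorted(unexpected)}")
    # Locked defaults are applied last, so they always win.
    return {"name": name, **options, **LOCKED_DEFAULTS}

bucket = golden_bucket("reports", lifecycle_days=30)
print(bucket)
```

Rejecting unknown options, rather than silently ignoring them, doubles as a guardrail: a call such as `golden_bucket("x", block_public_access=False)` fails loudly instead of producing a bucket that looks compliant but isn’t what the developer asked for.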

Example #2: Introducing Security Standardization

When the security team creates resource functions with built-in security standards, developers who adhere to these standards can confidently configure the resources they need without introducing security issues into the cloud ecosystem.
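A resource function with built-in standards can validate its input before anything is provisioned. The hypothetical helper below builds a firewall ingress rule and refuses anything that breaks two example standards: administrative ports may not be opened to the whole internet, and every rule needs a written justification. Both standards and the port list are assumptions for illustration.

```python
import ipaddress

ADMIN_PORTS = {22, 3389}  # SSH and RDP; an example list only

def ingress_rule(port: int, cidr: str, justification: str) -> dict:
    """Return an ingress rule definition, or raise ValueError if it breaks a standard."""
    # Raises ValueError for invalid CIDRs, including ones with host bits set.
    network = ipaddress.ip_network(cidr)
    if not justification.strip():
        raise ValueError("every rule needs a justification")
    # A prefix length of 0 means "any address" (0.0.0.0/0 or ::/0).
    if port in ADMIN_PORTS and network.prefixlen == 0:
        raise ValueError(f"port {port} may not be opened to {cidr}")
    return {"port": port, "cidr": str(network), "justification": justification}

print(ingress_rule(443, "0.0.0.0/0", "public web front end"))
print(ingress_rule(22, "10.0.0.0/8", "bastion access from the corporate network"))
```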

Example #3: Automating Security with Infrastructure as Code (IaC)

IaC is a way to manage and provision infrastructure through code-based specifications instead of manual processes. To create a paved road for IaC, the security team can introduce tagging standards to provision and track cloud resources. They can also build strong security guardrails into the development environment to secure new infrastructure from the outset.
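One way to enforce a tagging standard as a guardrail is to check every Terraform plan before it is applied. The sketch below reads the JSON produced by `terraform show -json <planfile>` and looks at each entry in `resource_changes`; it assumes resources expose their tags under `change.after.tags`, as AWS provider resources do. The required tag names are hypothetical.

```python
import json
import sys

# Hypothetical organization-wide tagging standard.
REQUIRED_TAGS = {"owner", "cost-center", "data-classification"}

def missing_tags(plan: dict) -> dict:
    """Map each created, updated, or replaced resource address to the required tags it lacks."""
    problems = {}
    for rc in plan.get("resource_changes", []):
        actions = rc.get("change", {}).get("actions", [])
        if not {"create", "update"} & set(actions):
            continue  # skip no-op, read, and delete-only changes
        after = rc["change"].get("after") or {}
        tags = after.get("tags") or {}
        missing = REQUIRED_TAGS - set(tags)
        if missing:
            problems[rc["address"]] = sorted(missing)
    return problems

def main(argv: list[str]) -> int:
    with open(argv[1]) as f:
        problems = missing_tags(json.load(f))
    for address, tags in problems.items():
        print(f"{address}: missing tags {tags}")
    return 1 if problems else 0  # a non-zero exit code fails the CI job

if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv))
```

In a pipeline, this would run after `terraform plan -out=tfplan` and `terraform show -json tfplan > plan.json`, with the script given `plan.json`, so untagged resources are blocked before they reach the cloud.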

The Principle of Least Privilege (PoLP) is often mentioned in the same breath as Zero Trust, and it is one of Zero Trust’s core principles. PoLP is about ensuring that a user can only access the resources they need to complete a required task. The idea is to prevent the misuse of critical systems and data and reduce the attack surface to decrease the probability of breaches.

Example #1: Ring-fencing critical assets

This is the process of isolating specific “crown jewel” applications so that even if attackers make it into your environment, they cannot reach that data or application. As few people as possible are given credentials that allow access, in line with least privilege rules. Crown jewel applications can be anything from the systems that store sensitive customer data to business-critical systems and processes.
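Ring-fencing can be expressed as an explicit allowlist per crown-jewel asset that takes precedence over every other access rule. The asset and principal names below are hypothetical; in practice this list would live in your identity provider or policy engine.

```python
# Hypothetical crown-jewel assets and the only principals allowed to reach them.
RING_FENCE = {
    "customer-db": {"svc-billing", "dba-oncall"},
    "payments-api": {"svc-checkout"},
}

def can_access(principal: str, asset: str, allowed_by_other_rules: bool) -> bool:
    """For crown jewels, only the allowlist counts; other assets fall back to normal rules."""
    if asset in RING_FENCE:
        return principal in RING_FENCE[asset]
    return allowed_by_other_rules

print(can_access("svc-billing", "customer-db", allowed_by_other_rules=False))
print(can_access("dev-alice", "customer-db", allowed_by_other_rules=True))
```

Because the allowlist overrides broader rules, a compromised account that happens to hold wide network access still cannot reach a ring-fenced asset.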

Example #2: Establishing Role-Based Access Control (RBAC)

RBAC, or role-based access control, grants access to specific data, applications, or parts of the network based on the role a user holds at the company. This goes hand in hand with the principle of least privilege: if credentials are stolen, the attackers are limited to the access held by the employee in question. Because access is tied to users, you can also isolate privileged user sessions to give them an extra layer of protection. The business would be in real trouble only if an administrator account, or one with broad access privileges, were stolen.
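A minimal RBAC check maps users to roles and roles to permissions, and denies anything not explicitly granted. The roles, users, and `action:resource` permission strings below are hypothetical examples.

```python
# Hypothetical roles and the permissions they grant, written as "action:resource".
ROLE_PERMISSIONS = {
    "analyst": {"read:reports"},
    "engineer": {"read:reports", "deploy:staging"},
    "admin": {"read:reports", "deploy:staging", "deploy:production", "manage:users"},
}

# Hypothetical user-to-role assignments.
USER_ROLES = {
    "alice": {"analyst"},
    "bob": {"engineer"},
}

def is_allowed(user: str, permission: str) -> bool:
    """Allow only if one of the user's roles grants the permission (deny by default)."""
    return any(permission in ROLE_PERMISSIONS.get(role, set())
               for role in USER_ROLES.get(user, set()))

print(is_allowed("alice", "read:reports"))
print(is_allowed("bob", "deploy:production"))
```

If Alice’s credentials were stolen, the attacker would inherit only `read:reports`; only the `admin` role unlocks production deployments and user management, which is why such accounts deserve the extra protection described above.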

Example #3: Isolate applications, tiers, users, or data

This is usually done with micro-segmentation, where specific applications, users, data, or any other element of the business is protected from attack by internal next-gen firewalls. Risk is reduced in a similar way to the examples above: following the principle of least privilege, the requisite access is granted only to those who need it, and no one else. In some situations, you might need to grant elevated privileges for a short period of time, for example during an emergency. Watch out for privilege creep, where users gain more access over time without any corrective oversight.
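One way to watch for privilege creep is to periodically compare what each user has been granted with what they have actually used, and flag the difference for review. The sketch assumes both can be exported as sets of permission strings (for example, from IAM policies and access logs over a review window); the data below is made up.

```python
def unused_permissions(granted: dict[str, set[str]],
                       used: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per user, the permissions granted but not used during the review window."""
    report = {}
    for user, perms in granted.items():
        unused = perms - used.get(user, set())
        if unused:
            report[user] = unused
    return report

# Hypothetical grants and observed usage over the last 90 days.
granted = {
    "alice": {"read:reports", "deploy:staging", "deploy:production"},
    "bob": {"read:reports"},
}
used_last_90_days = {
    "alice": {"read:reports"},
    "bob": {"read:reports"},
}
print(unused_permissions(granted, used_last_90_days))
```

The output is a review list, not an automatic revocation: a permission that is rarely used (such as an emergency break-glass right) may still be legitimate.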

Paved Road, Guardrails and PoLP concepts are all essential for a strong cloud security posture. By adopting these concepts, your organization can move to the next stage of cloud security maturity and create a culture of security-minded responsibility at every level of the enterprise.

The Prevasio cloud security platform allows you to apply these concepts across your entire cloud estate while securing your most critical applications.

Organizations no longer keep their data in one centralized location. Users and assets responsible for processing data may be located outside the network, and may share information with third-party vendors who are another step removed from the organization’s network.

The Zero Trust approach addresses this situation by treating every user, asset, and application as a potential attack vector whether it is authenticated or not. This means that everyone trying to access network resources will have to verify their identity, whether they are coming from inside the network or outside.

The Zero Trust approach is made up of six core concepts that work together to mitigate network security risks and reduce the organization’s attack surface.

Under the Zero Trust model, network administrators do not provide users and assets with more network access than strictly necessary. Access to data is also revoked when it is no longer needed. This requires security teams to carefully manage user permissions, and to be able to manage permissions based on users’ identities or roles.
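Revoking access “when it is no longer needed” is easiest to enforce when every grant carries an expiry time. The class below is a hypothetical in-memory sketch, not a real product API; the current time is passed in explicitly so the behavior is easy to test.

```python
from datetime import datetime, timedelta, timezone

class GrantStore:
    """Hypothetical store of access grants that expire automatically."""

    def __init__(self) -> None:
        self._grants: dict[tuple[str, str], datetime] = {}  # (user, resource) -> expiry (UTC)

    def grant(self, user: str, resource: str, duration: timedelta, now: datetime) -> None:
        self._grants[(user, resource)] = now + duration

    def revoke(self, user: str, resource: str) -> None:
        self._grants.pop((user, resource), None)

    def has_access(self, user: str, resource: str, now: datetime) -> bool:
        expiry = self._grants.get((user, resource))
        if expiry is None:
            return False  # deny by default
        if now >= expiry:
            self.revoke(user, resource)  # expired grants are removed, not just ignored
            return False
        return True

t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
store = GrantStore()
store.grant("alice", "finance-share", timedelta(hours=4), now=t0)
print(store.has_access("alice", "finance-share", now=t0 + timedelta(hours=1)))
print(store.has_access("alice", "finance-share", now=t0 + timedelta(hours=5)))
```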

The principle of least privilege secures the enterprise network ecosystem by limiting the amount of damage that can result from a single security failure. If an attacker compromises a user’s account, they won’t automatically gain access to a wide range of systems, tools, and workloads beyond what that account is provisioned for. This can also dramatically simplify the process of responding to security events, because no user or asset has access to assets beyond the scope of their work.

Zero Trust policy assumes that there are attackers both inside and outside the network. To guarantee the confidentiality, integrity, and availability of network assets, the organization must continuously evaluate users and assets on the network. User identity and privileges must be checked periodically, along with device identity and security.

Organizations accomplish this in a variety of ways. Connection and login time-outs are one way to ensure periodic monitoring and validation, since they require users to re-authenticate even if they haven’t done anything suspicious. This helps protect against threat actors using credential-based attacks to impersonate authenticated users, as well as a variety of other attacks.
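A common way to implement time-outs is to combine an idle limit with an absolute session lifetime, forcing re-authentication when either is exceeded. The limits below are example values, not recommendations from any standard.

```python
from datetime import datetime, timedelta

IDLE_TIMEOUT = timedelta(minutes=15)    # example value; set according to your policy
ABSOLUTE_TIMEOUT = timedelta(hours=8)   # example value; set according to your policy

def session_valid(started: datetime, last_activity: datetime, now: datetime) -> bool:
    """A session must be re-authenticated after inactivity or after its maximum lifetime."""
    if now - last_activity > IDLE_TIMEOUT:
        return False
    if now - started > ABSOLUTE_TIMEOUT:
        return False
    return True

t0 = datetime(2024, 1, 1, 9, 0)
print(session_valid(t0, t0 + timedelta(minutes=10), t0 + timedelta(minutes=20)))
print(session_valid(t0, t0, t0 + timedelta(minutes=16)))
```

The absolute limit matters even for active sessions: a stolen session token that an attacker keeps busy would otherwise never expire.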

Organizations on the Zero Trust journey must carefully manage and control the way users interact with endpoint devices. Zero Trust relies on verifying and authenticating user identities separately from the devices they use. For example, Zero Trust security tools must be able to distinguish between two different individuals using the same endpoint device.

This approach requires fundamental changes to the way certain security tools work. For example, firewalls that allow or deny access to network assets based purely on IP address and port information aren’t sufficient. Most end users have more than one device at their disposal, and it’s common for mobile devices to change IP addresses. As a result, the cybersecurity tech stack needs to be able to grant and revoke permissions based on the user’s actual identity or role.
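The difference from IP-based filtering can be shown in a few lines: the decision below depends on the authenticated user’s role and the device’s posture, while the source IP is recorded but ignored. The policy table and field names are hypothetical; the role is assumed to come from the identity provider after authentication.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AccessRequest:
    user_id: str            # the authenticated individual
    role: str               # assumed to be supplied by the identity provider
    device_id: str
    device_compliant: bool  # e.g., disk encryption and patch level verified
    source_ip: str          # kept for logging; deliberately not used in the decision

# Hypothetical policy: which roles may reach which applications.
ROLE_ACCESS = {"finance": {"ledger"}, "support": {"ticketing"}}

def authorize(req: AccessRequest, app: str) -> bool:
    """Decide on who the user is and the state of their device, not where traffic comes from."""
    return req.device_compliant and app in ROLE_ACCESS.get(req.role, set())

# Two people sharing the same laptop and IP address get different answers.
alice = AccessRequest("u1", "finance", "laptop-7", True, "10.0.0.5")
bob = AccessRequest("u2", "support", "laptop-7", True, "10.0.0.5")
print(authorize(alice, "ledger"), authorize(bob, "ledger"))
```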

Network segmentation is a good security practice even outside the Zero Trust framework, but it takes on special significance when threats can come from inside and outside the network. Microsegmentation takes this one step further by breaking regular network segments down into small zones with their own sets of permissions and authorizations.

These microsegments can be as small as a single asset, and an enterprise data center may have dozens of separately secured zones like these. Any user or asset with permission to access one zone will not necessarily have access to any of the others. Microsegmentation improves security resilience by making it harder for attackers to move between zones.

Lateral movement is when threat actors move from one zone to another in the network. One of the benefits of microsegmentation is that threat actors must interact with security tools in order to move between different zones on the network. Even if the attackers are successful, their activities generate logs and audit trails that analysts can follow when investigating security incidents.
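The two properties described above, default-deny between zones and an audit trail for every crossing attempt, can be sketched with a small policy function. The asset-to-zone map, the allowed flows, and the ports are hypothetical; in practice this logic lives in firewalls or a segmentation platform rather than application code.

```python
import logging

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("segmentation")

# Hypothetical zone map and the only flows allowed between zones; everything else is denied.
ASSET_ZONE = {"web-01": "web", "app-01": "app", "db-01": "db", "hr-files": "hr"}
ALLOWED_FLOWS = {("web", "app", 8443), ("app", "db", 5432)}

def allow_flow(src: str, dst: str, port: int) -> bool:
    """Permit traffic only along explicitly allowed zone-to-zone paths, and log every decision."""
    src_zone, dst_zone = ASSET_ZONE.get(src), ASSET_ZONE.get(dst)
    allowed = (src_zone, dst_zone, port) in ALLOWED_FLOWS
    level = logging.INFO if allowed else logging.WARNING
    log.log(level, "%s %s(%s) -> %s(%s):%d", "ALLOW" if allowed else "DENY",
            src, src_zone, dst, dst_zone, port)
    return allowed

allow_flow("web-01", "app-01", 8443)   # permitted tier-to-tier traffic
allow_flow("web-01", "db-01", 5432)    # lateral attempt that skips the app tier: denied and logged
```

Unknown assets map to no zone and are therefore denied, and the `DENY` warnings form exactly the kind of trail analysts can follow when reconstructing an attacker’s attempted path.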